Increase conversion rates – through personalization

There are many ways to increase the conversion rate in your online shop. We will show you the options available to you using various live examples.

What does your target audience really like? Which elements are guaranteed to get clicks? A/B testing is the key to finding out exactly that. With the help of tools, you can easily test two versions of a landing page on your website or in an email against each other and gradually work your way toward the perfect landing page or email. Here you can learn how A/B testing works in e-commerce and what you need to pay attention to in order to get meaningful results.

Here's what you can expect to find in this blog article:

Why are A/B tests suitable for the e-commerce industry?

What advantages do A/B tests offer you?

What can you check with A/B testing?

Which statistical approach is suitable for conducting an A/B test?

How do you conduct an A/B test?

1. Identify conversion barriers and opportunities

2. Formulate a suitable hypothesis

3. Implement the hypothesis and run the test

4. Collect data and document tests

5. Analyze the data

You should avoid these 4 common mistakes when conducting A/B tests

1. Too many changes at once

2. Sample size too small

3. Confusion among existing customers

4. Test period too short

What are the challenges of A/B testing?

Conclusion: A/B testing is the key to efficient marketing in e-commerce.

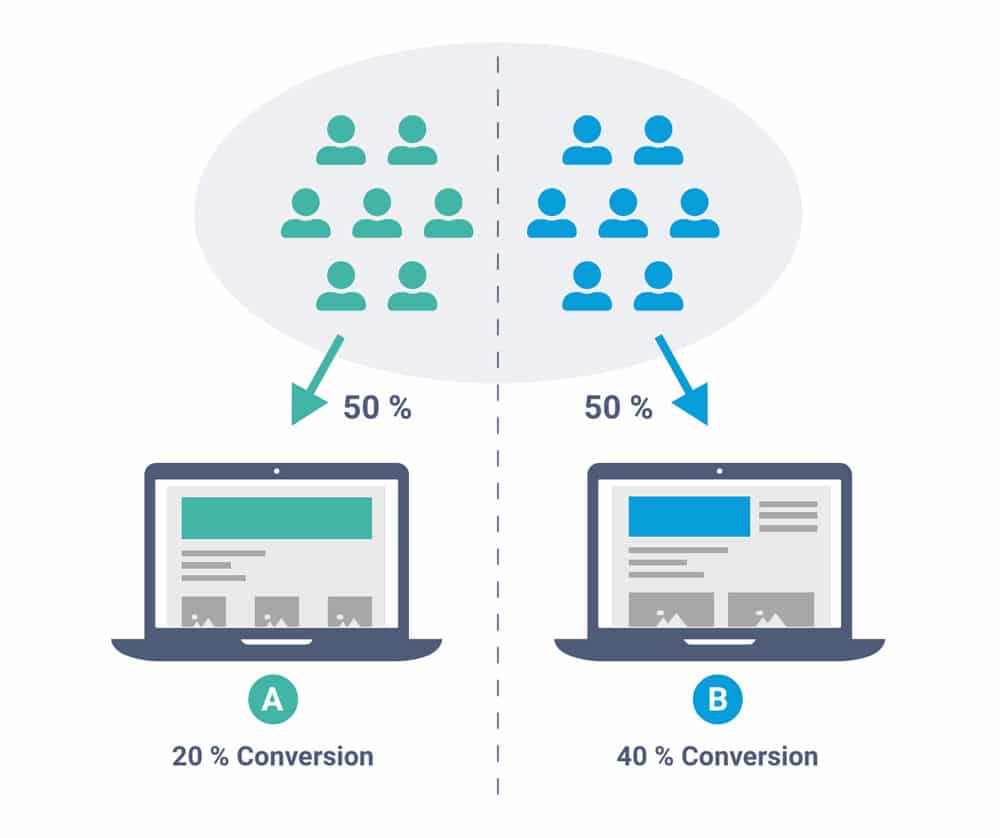

In A/B testing in e-commerce, you compare two versions of a landing page on your website or an email to find out which version is more popular with your target audience. You show the original version to some visitors and a slightly modified version to others. You then compare which version has the better KPIs. This allows you to continuously optimize landing pages or emails and increase their conversion rate.

Graphical representation of an A/B test including results, in which group B performs significantly better.

A/B testing is ideal for online shops and should definitely have a permanent place in the marketing team's toolbox. This is because it allows you to significantly increase the return on many online marketing campaigns in e-commerce with relatively little effort. Which wording convinces new customers on the landing page? How should the page be structured? What small changes in design can make the newsletter even more appealing to the target group? These are all questions that A/B testing can provide well-founded answers to.

A/B testing can significantly increase the efficiency of your marketing campaigns. The greatest strength of a well-planned A/B test is its objectivity. You can use data to make arguments and optimize elements. This often allows you to identify and discard false assumptions.


If you know how to address your target group in such a way that they respond positively, you have a competitive advantage in e-commerce. Your advertising campaigns will pay off faster and you can strengthen customer loyalty. The technical implementation is also easy and inexpensive thanks to various tools such as Optimizely, AB Tasty, or VWO.

A/B testing can, in principle, be carried out on all elements of a website, email, or advertisement. Elements where even small changes can have a significant impact include:

In A/B testing, the following variants are often distinguished:

In principle, all two-sample hypothesis tests can be considered. Which one is most suitable depends on the specific application. Most tools integrate statistical tests on different bases and evaluate them automatically in a way that even laypeople can understand.

One of the most commonly used tests is the chi-square test. It lets you check whether the change leads to statistically significantly better results. A significance level of 5% is the usual convention in science. This limits the risk of accepting a hypothesis as true even though the change has no real impact on your success.
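To make the chi-square test more concrete, here is a minimal sketch in Python. The function name `chi_square_2x2` and the visitor and conversion counts are illustrative assumptions, not figures from a real test. The sketch uses the plain Pearson statistic without a continuity correction, so a testing tool may report slightly different values.

```python
import math

def chi_square_2x2(conv_a, n_a, conv_b, n_b):
    """Pearson chi-square test for conversions vs. non-conversions in two variants."""
    observed = [
        [conv_a, n_a - conv_a],  # variant A: converted, not converted
        [conv_b, n_b - conv_b],  # variant B: converted, not converted
    ]
    total = n_a + n_b
    row_totals = [n_a, n_b]
    col_totals = [conv_a + conv_b, total - conv_a - conv_b]

    chi2 = 0.0
    for i in range(2):
        for j in range(2):
            # Expected count if the variant had no influence on conversions
            expected = row_totals[i] * col_totals[j] / total
            chi2 += (observed[i][j] - expected) ** 2 / expected

    # With one degree of freedom, the p-value is erfc(sqrt(chi2 / 2))
    p_value = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p_value

# Made-up example: 10,000 visitors per variant, 100 vs. 140 conversions
chi2, p = chi_square_2x2(conv_a=100, n_a=10_000, conv_b=140, n_b=10_000)
print(f"chi2 = {chi2:.2f}, p = {p:.4f}, significant at 5%: {p < 0.05}")
```

If the p-value is below 0.05, a difference this large would be unlikely to occur by chance alone at the 5% significance level.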

Another option is the Bayesian approach, which combines prior assumptions with the observed data and expresses the result as a probability, for example the probability that variant B is better than variant A. One advantage is that it allows poor variants to be eliminated quickly. However, stopping early in this way makes false conclusions more likely.
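As a rough illustration of the Bayesian idea, the following Python sketch estimates the probability that variant B has the higher conversion rate. It assumes a uniform prior and a simple Beta-Binomial model. The function name `prob_b_beats_a` and the numbers are hypothetical, and commercial tools may use different models.

```python
import random

def prob_b_beats_a(conv_a, n_a, conv_b, n_b, draws=20_000, seed=42):
    """Monte Carlo estimate of P(rate_B > rate_A) under a uniform Beta(1, 1) prior."""
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    wins = 0
    for _ in range(draws):
        # Posterior of each conversion rate: Beta(1 + conversions, 1 + non-conversions)
        rate_a = rng.betavariate(1 + conv_a, 1 + n_a - conv_a)
        rate_b = rng.betavariate(1 + conv_b, 1 + n_b - conv_b)
        if rate_b > rate_a:
            wins += 1
    return wins / draws

# Made-up example: 10,000 visitors per variant, 100 vs. 140 conversions
probability = prob_b_beats_a(conv_a=100, n_a=10_000, conv_b=140, n_b=10_000)
print(f"Estimated probability that B beats A: {probability:.3f}")
```

A value close to 1 speaks for variant B, a value close to 0 for variant A, and a value around 0.5 means the data does not yet favor either version.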

Follow these five steps to successfully complete your first A/B test:

1. Identify conversion barriers and opportunities

A low click-through rate, a high bounce rate, or other online marketing KPIs are good indicators of potential for improvement. It is helpful to acquire some background knowledge of sales psychology. This will enable you to identify elements that could be improved more quickly and give you an idea of how to optimize them.

2. Formulate a suitable hypothesis

A hypothesis always has the following characteristics: it is potentially falsifiable, verifiable, and consists of a conditional clause. The if-then form in particular has proven itself in marketing. An example would be: "If the page shows an image of a blonde woman instead of an image of a dark-haired man, then the conversion rate improves."

3. Implement the hypothesis and run the test

Once you have your hypothesis, you can get started and implement the change. A/B testing tools are often a big help here, as they frequently work with WYSIWYG editors ("What you see is what you get"). This means you can preview the changes beforehand and make sure that the display works. Either way, a small test run helps to ensure that you don't accidentally display the wrong pages or changes and that your visitors are distributed across the two variants as desired.

Tip: If it makes sense, you should always test changes on a page with high traffic. That's where you'll get the best data quality the fastest.
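If you want to check how visitors are distributed across the two variants, a sketch like the following can help. It shows one common way to split traffic, namely hashing a visitor ID, and is not taken from any particular tool. The function name `assign_variant`, the experiment name, and the visitor IDs are assumptions for illustration.

```python
import hashlib

def assign_variant(visitor_id: str, experiment: str = "landing-page-test") -> str:
    """Assign a visitor to variant A or B, always the same one for the same ID."""
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    digest = hashlib.sha256(key).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket between 0 and 99
    return "A" if bucket < 50 else "B"

# Small test run: count how 10,000 hypothetical visitors are distributed
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"visitor-{i}")] += 1
print(counts)
```

Because the assignment depends only on the ID, a returning visitor keeps seeing the same version, and the counts should come out close to an even split.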

4. Collect data and document tests

Now it's time to wait and collect data. You should collect a five-digit number of data points for each variant so that your results are meaningful, and each version should reach around 100 conversions. Many tools report a reliability figure, often labeled as confidence or statistical significance. It should be at least 95% before you end the test. There is one exception: if no difference emerges after a reasonable period of time, the change is neither significantly better nor worse, and you should move on to another test.

You should also keep a detailed record of what you changed, how you changed it, and the time periods from which the data points originate. This will allow you to check later whether there are any temporal correlations.

5. Analyze the data

Once you have enough data, it's time to confirm or reject your hypothesis. In most statistical tests, the difference between the variants in the tested KPI must be statistically significant, typically at the 5% significance level (a p-value below 0.05). If this is not the case, the hypothesis, i.e., the new variant, must be rejected.
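When analyzing the data, it can also help to look at how large the difference is and how uncertain that estimate remains. The following Python sketch computes the difference in conversion rates with an approximate 95% confidence interval (a simple normal approximation). The function name `uplift_with_ci` and the numbers are made up for illustration.

```python
import math
from statistics import NormalDist

def uplift_with_ci(conv_a, n_a, conv_b, n_b, confidence=0.95):
    """Difference in conversion rates (B minus A) with a normal-approximation interval."""
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    diff = rate_b - rate_a
    # Standard error of the difference between two independent proportions
    std_error = math.sqrt(rate_a * (1 - rate_a) / n_a + rate_b * (1 - rate_b) / n_b)
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    return diff, (diff - z * std_error, diff + z * std_error)

# Made-up example: 10,000 visitors per variant, 100 vs. 140 conversions
diff, (low, high) = uplift_with_ci(conv_a=100, n_a=10_000, conv_b=140, n_b=10_000)
print(f"Difference: {diff:.4f}, interval: {low:.4f} to {high:.4f}")
```

If the whole interval lies above zero, the data supports the new variant. If the interval includes zero, the test has not shown a reliable difference.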

Be sure to avoid the following mistakes when conducting A/B testing in e-commerce.

1. Too many changes at once

If you change too much at once, you won't know which elements are contributing to your success. Therefore, focus on only a few changes in each test.

2. Sample size too small

If you only change a small detail, your data set must be large enough to be meaningful. The size of the sample depends on various factors. However, as a rule of thumb, such a test is only considered sufficiently meaningful if it includes at least 10,000 data points. Calculation tools make it easy to find out exactly how many data points you need.
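To give an idea of what such calculation tools do, here is a minimal Python sketch based on the standard approximation for comparing two conversion rates. The function name `sample_size_per_variant`, the 80% power, and the example rates are assumptions for illustration. Real calculators may use slightly different formulas.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Approximate number of visitors needed per variant for a two-sided test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # significance level of 5%
    z_beta = NormalDist().inv_cdf(power)           # assumed statistical power of 80%
    variance = (baseline_rate * (1 - baseline_rate)
                + expected_rate * (1 - expected_rate))
    effect = expected_rate - baseline_rate
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)

# Made-up example: conversion rate of 1.0% today, hoped-for rate of 1.4%
needed = sample_size_per_variant(baseline_rate=0.010, expected_rate=0.014)
print(f"Visitors needed per variant: {needed}")
```

The smaller the expected improvement, the more visitors you need, which is why tests of small details require particularly large samples.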

3. Confusion among existing customers

If you test too much, you will confuse your existing customers because your shop loses recognition value. You can prevent this by initially showing significant changes only to new visitors or new email subscribers.

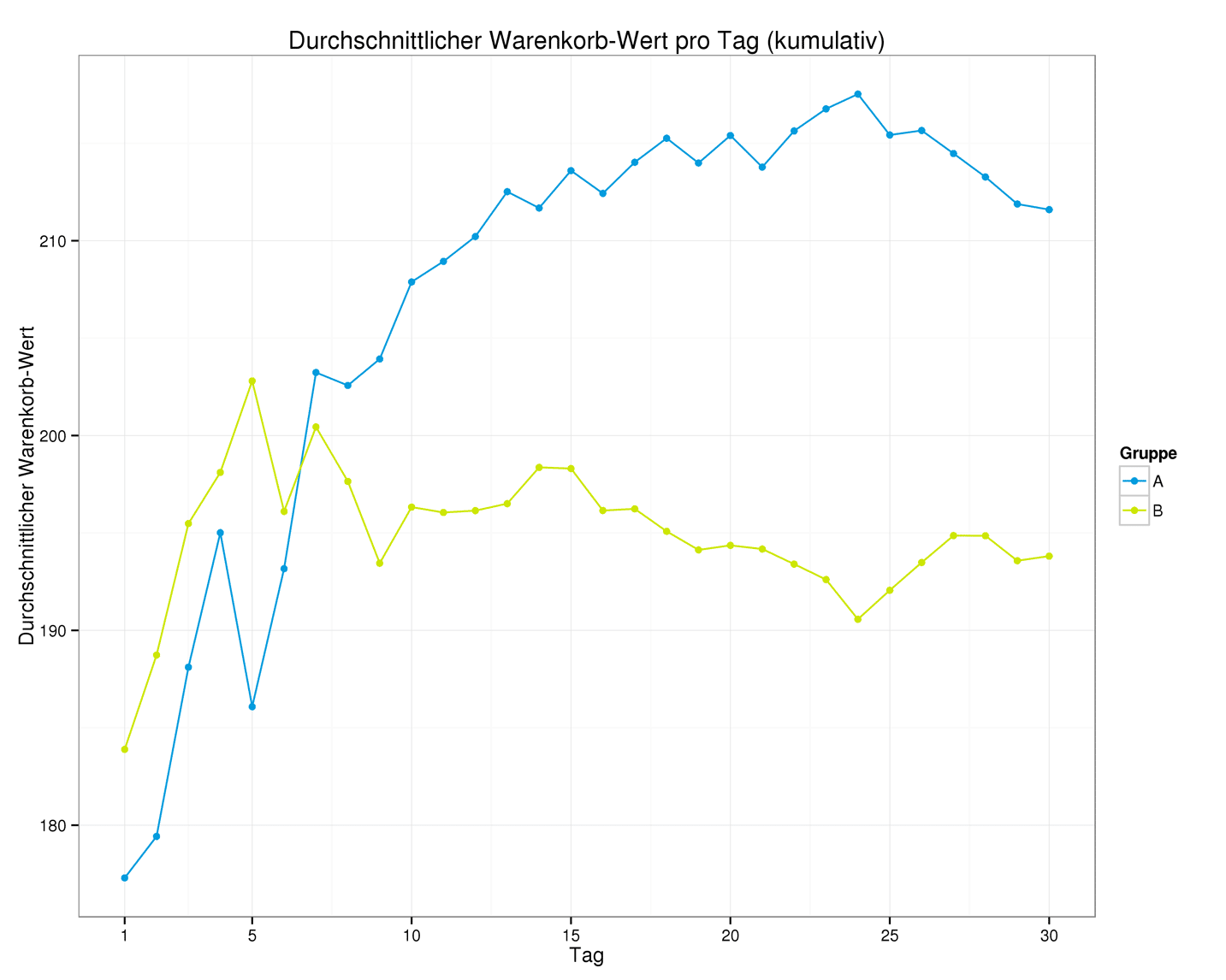

4. Test period too short

A sufficiently long period of time is important in order to obtain reliable data. Different times and days of the week often also have an impact on conversions. As a rule of thumb, you should test a landing page for around two weeks.

If you measure too early, you may not be able to identify the better option. In the example shown, the better variant only becomes clear after day 7.

There are two major challenges when it comes to A/B testing: First, formulating a meaningful hypothesis. It must be verifiable, and the intended change should also have a good chance of being better than the original version. While changing elements in unexpected ways can sometimes yield surprising insights, a targeted approach leads to more efficient optimization.

Second, evaluating tests and interpreting data such as sales figures can be complicated and can lead to false conclusions. One reason is that individual elements may achieve an improvement in one context, but not necessarily in another. After all, the whole is more than the sum of its parts. A particular headline, in combination with a particular image and a CTA, may appeal particularly well to the target audience. A CTA that converted better in a test conducted some time ago will therefore not automatically work better in a new context.


This is exactly what can be tested with multivariate tests: you vary the headline and image respectively and test which combination works best.
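A short Python sketch shows how the combinations for such a multivariate test come about. The headline and image names are placeholders, not real test content.

```python
from itertools import product

# Hypothetical elements to vary
headlines = ["Headline 1", "Headline 2"]
images = ["Image 1", "Image 2"]

# Every headline is combined with every image
combinations = [
    {"headline": headline, "image": image}
    for headline, image in product(headlines, images)
]

for number, combination in enumerate(combinations, start=1):
    print(f"Variant {number}: {combination['headline']} + {combination['image']}")
```

Two headlines and two images already result in four variants. Since your traffic is divided among all of them, a multivariate test needs considerably more visitors than a simple A/B test.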

A/B testing is a relatively easy-to-understand method that, thanks to a wide range of tools, is also easy to implement. It allows you to tailor your landing pages or emails to the preferences of your target group. This makes it an indispensable tool for optimizing your conversion rate in online marketing.

Would you like to learn more about how you can increase the conversion rate in your online shop?

Then schedule a web demo on the topic now!

